Optimization of academic performance and mental health in college students through an AI-driven personalized physical exercise and mindfulness intervention system

Experimental subjects and methods

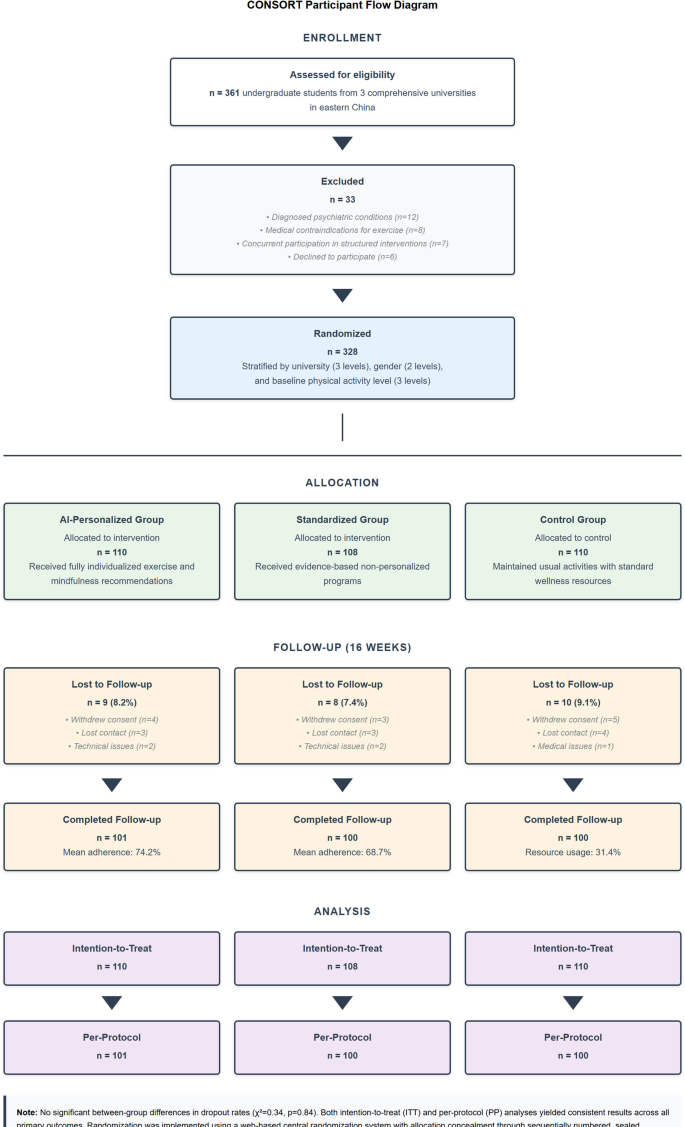

The study employed a stratified randomized controlled trial with 328 undergraduate students (aged 18–25 years) from three comprehensive universities in eastern China. Randomization used a web-based central system with allocation concealment through sequentially numbered, sealed, opaque envelopes. Stratification by university (3 levels), gender (2 levels), and baseline activity level (3 levels) resulted in 18 blocks with random sizes (6–12) to prevent allocation prediction. Dropout rates were 8.2% (AI-personalized, n = 9), 7.4% (standardized, n = 8), and 9.1% (control, n = 10), with no significant differences (χ²=0.34, p = 0.84). Both intention-to-treat and per-protocol analyses yielded consistent results.
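The stratified block allocation described above can be sketched as follows. This is a minimal illustration, not the trial's randomization system. The arm labels, the function names, the seed, and the restriction of block sizes to 6, 9, and 12 (the multiples of three within the stated 6–12 range, so that every block is balanced across three arms) are assumptions.

```python
import random
from collections import Counter
from itertools import product

ARMS = ("ai_personalized", "standardized", "control")  # hypothetical labels


def block_sequence(n_slots, rng, block_sizes=(6, 9, 12)):
    """Return n_slots assignments built from balanced, shuffled blocks."""
    sequence = []
    while len(sequence) < n_slots:
        size = rng.choice(block_sizes)            # random block size
        block = list(ARMS) * (size // len(ARMS))  # equal arms within a block
        rng.shuffle(block)
        sequence.extend(block)
    return sequence[:n_slots]


def build_allocation(slots_per_stratum, seed=2024):
    """One independent sequence per university x gender x activity stratum."""
    rng = random.Random(seed)
    strata = product(range(3), range(2), range(3))  # 3 * 2 * 3 = 18 strata
    return {s: block_sequence(slots_per_stratum, rng) for s in strata}


allocation = build_allocation(slots_per_stratum=24)
counts = Counter(allocation[(0, 0, 0)])  # arm counts in the first stratum
```

Because the block size varies at random, the next assignment cannot be inferred from the number of participants already enrolled in a stratum, which is the purpose of random block sizes stated above.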

The three groups differed as follows: (1) AI-personalized group (n = 110) received individualized exercise and mindfulness recommendations dynamically adapted through algorithmic learning, with push notifications (4–5 daily), weekly progress reports, and real-time biometric feedback; (2) Standardized group (n = 108) received evidence-based but fixed exercise and mindfulness programs with equivalent notification frequency (4–5 daily), weekly reports, and basic activity tracking to control for attention effects; (3) Control group (n = 110) maintained usual activities with access to standard university wellness resources. Importantly, the standardized group was designed as an attention-matched control, receiving similar app interaction frequency (mean 5.8 vs. 6.1 logins/week, p = 0.34) to isolate the effect of AI personalization from general digital engagement.

Participant recruitment utilized a stratified sampling approach to ensure proportional representation across academic disciplines, year levels, and demographic characteristics including gender distribution (54.3% female, 45.7% male), academic majors (38.4% STEM, 31.7% social sciences, 17.2% humanities, 12.7% professional programs), and prior physical activity levels49. Inclusion criteria specified full-time enrollment status, absence of diagnosed psychiatric conditions requiring clinical treatment, and no medical contraindications for moderate physical activity. Exclusion criteria included concurrent participation in structured psychological interventions, competitive athletic training programs, or mindfulness-based courses to minimize confounding influences.

Participants were systematically allocated to one of three experimental conditions using a balanced assignment protocol: (1) AI-personalized intervention group (n = 110) receiving fully individualized exercise and mindfulness recommendations adapted through the described algorithmic framework; (2) standardized intervention group (n = 108) receiving evidence-based but non-personalized exercise and mindfulness programs of equivalent frequency and duration; and (3) control group (n = 110) maintaining usual activities with access to university standard wellness resources. The randomization procedure incorporated blocking stratified by university, gender, and baseline physical activity level to ensure balanced distribution of potentially confounding variables across experimental conditions. Due to the nature of the digital interventions, participants were aware of their assigned condition, but outcome assessors and data analysts remained blinded to group allocation throughout data collection and statistical analysis to minimize assessment bias.

The intervention period spanned 16 weeks (one academic semester) with assessments conducted at baseline (T0), mid-intervention (T1, week 8), post-intervention (T2, week 16), and follow-up (T3, week 28) to evaluate both immediate effects and maintenance of outcomes. During the intervention period, participants in both active intervention groups received their respective exercise and mindfulness program recommendations through a smartphone application specially developed for this research. The AI-personalized group experienced dynamic adaptation of recommendations based on individual data patterns, while the standardized group received a predetermined progressive program. Both interventions prescribed equivalent exercise frequency (3–4 sessions weekly) and mindfulness practice duration (10–15 min daily), with variations in exercise modality, intensity, timing, and mindfulness technique selection occurring only in the personalized condition. Participants in all groups maintained digital logs of academic activities, stressors, and emotional states to enable comprehensive pattern analysis while controlling for potential intervention effects of self-monitoring.

Data collection employed a mixed-methods approach combining quantitative assessments, qualitative feedback, and passive data gathering. Figure 4 depicts the CONSORT participant flow diagram showing recruitment, allocation, follow-up, and analysis stages with detailed reasons for dropout at each phase.

CONSORT Participant Flow Diagram.

Academic performance data were collected directly from university records with participant consent, including course grades, attendance records, assignment scores, and learning management system engagement metrics. Psychological assessments employed validated standardized instruments administered at scheduled assessment points alongside ecological momentary assessments delivered six times weekly at random intervals. Physiological data collection utilized wearable devices (Xiaomi Smart Band 6) providing continuous monitoring of heart rate variability, physical activity patterns, and sleep metrics with data transmitted to the secure research server through encrypted channels. Cognitive performance measures were administered through computerized assessment batteries at scheduled laboratory sessions. Intervention engagement was automatically tracked through the application interface, recording frequency, duration, and completion rates for recommended activities.

Rigorous experimental controls were implemented to minimize confounding influences including academic calendar standardization (conducting the experiment during equivalent academic periods across universities), environmental consistency (providing standardized intervention spaces for campus-based activities), and technology equivalence (ensuring all participants had compatible smartphones regardless of experimental condition). Potential contamination between experimental conditions was mitigated through participant agreements not to share intervention content and geofencing technology that restricted access to personalized recommendations.

Several methodological controls were implemented. The standardized group received equivalent attention through similar app interaction frequency (mean 5.8 vs. 6.1 logins/week, p = 0.34), push notifications (4–5 daily), and monitoring to control for digital engagement effects. Academic performance data were extracted by university registrars blinded to group allocation. Cognitive assessments were administered by trained research assistants unaware of participant conditions. Three baseline measurements over 4 weeks (T-4, T-2, T0) established stable pre-intervention trends with high intraclass correlations (GPA: ICC = 0.94; PSS: ICC = 0.87; HRV: ICC = 0.91). The standardized intervention group showed modest improvements (3.76% GPA increase, d = 0.34, p = 0.024), consistent with benefits of structured digital interventions. Follow-up at week 28 showed partial maintenance of effects (7.2% GPA improvement from baseline, d = 0.62). However, residual confounding from attention effects, technology novelty, and placebo responses cannot be fully excluded and represents a limitation discussed later.

The research protocol received approval from the University Ethics Committee (approval code: HEBU-REC-2024-031) and complied with the Declaration of Helsinki guidelines for research with human participants. All participants provided informed written consent after receiving detailed explanations of experimental procedures, data usage protocols, privacy protections, and their right to withdraw without consequence. Continuous physiological monitoring raised ethical considerations including data ownership, algorithmic bias, and potential psychological dependence on AI feedback24,39. To address these concerns, we implemented comprehensive safeguards: (1) Data privacy – end-to-end encryption of biometric data, anonymization after 6 months, and participant control over sharing preferences; (2) Data ownership – participants retained rights to request data deletion and received quarterly usage reports; (3) Algorithmic fairness – continuous monitoring across demographic subgroups with human oversight by wellness coordinators who could override recommendations; (4) Long-term storage – secure AWS HIPAA-compliant servers with access limited to authorized personnel; (5) Informed consent – detailed explanation of automated profiling processes, potential biases, and intervention limitations.

Beyond these general safeguards, specific algorithmic risk mitigation protocols were implemented to address potential harms from erroneous recommendations. First, exercise intensity recommendations were bounded by physiological safety limits (maximum heart rate not exceeding 85% of age-predicted maximum, mandatory rest days following high-intensity sessions), with automatic alerts triggered when recommended volumes exceeded evidence-based guidelines. Second, overtraining risk was monitored through weekly HRV trend analysis; declining HRV patterns over three consecutive days triggered automatic reduction in exercise intensity and duration, with wellness coordinator notification. Third, maladaptive stress responses were detected through combined analysis of elevated resting heart rate, deteriorating sleep quality, and increased negative affect ratings; such patterns prompted immediate intervention modification and optional referral to university counseling services.

During the study period, 12 participants (10.9% of the AI-personalized group) triggered overtraining alerts, resulting in temporary exercise reduction, and 3 participants were referred for additional psychological support. Participants were informed that AI recommendations were advisory rather than prescriptive and were encouraged to consult with university health services for any concerns.
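The first two safety rules above (the 85% heart-rate ceiling and the three-day HRV decline trigger) could be expressed roughly as in the sketch below. Several details are assumptions rather than reported specifics: the 220 minus age formula for age-predicted maximum heart rate, the reading of "three consecutive days" as three successive day-over-day decreases in RMSSD, the 20% intensity reduction, and all function names.

```python
def max_allowed_heart_rate(age_years, fraction=0.85):
    """Upper heart-rate bound; 220 - age is an assumed prediction formula."""
    return fraction * (220 - age_years)


def declining_streak(daily_rmssd, days=3):
    """True if RMSSD fell on each of the last `days` day-to-day transitions."""
    if len(daily_rmssd) < days + 1:
        return False
    recent = daily_rmssd[-(days + 1):]
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))


def adjust_prescription(target_hr, age_years, daily_rmssd, reduction=0.8):
    """Clamp the target heart rate, then scale it down after an HRV decline."""
    capped = min(target_hr, max_allowed_heart_rate(age_years))
    alert = declining_streak(daily_rmssd)
    if alert:
        capped *= reduction  # hypothetical 20% intensity reduction
    return capped, alert


# Hypothetical 20-year-old with four daily RMSSD readings (ms)
target, alert = adjust_prescription(180, 20, [50.0, 48.0, 46.0, 44.0])
```

In a deployed system the alert flag would also notify a wellness coordinator, as described above; that step is omitted here.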

Data protection measures included encryption of all personal information, secure cloud storage with restricted access, and automatic anonymization of identifiable data after a specified retention period. The study adhered to relevant data protection regulations and institutional guidelines for educational research.

Statistical analyses employed multi-level mixed-effects modeling to account for hierarchical data structure (repeated measures nested within individuals nested within universities), handling missing data through maximum likelihood estimation50. Statistical assumptions were verified: Shapiro-Wilk tests confirmed normality (all p > 0.05), Levene’s test confirmed variance homogeneity (all p > 0.15), and residual plots showed no systematic patterns. Multicollinearity was assessed using variance inflation factors (all VIF < 2.5). Missing data (< 8%) was handled using full information maximum likelihood. Multiple comparisons were corrected using the Benjamini-Hochberg procedure (FDR < 0.05) applied consistently across all Tables and Figures. Sample size calculation (d = 0.5, power = 0.80, α = 0.05) required 64 per group; we recruited 110 to account for dropout. Post-hoc power analysis confirmed adequate power (> 0.95) for observed effect sizes. Random effects structure (random intercepts and slopes for time) was selected based on likelihood ratio tests comparing nested models (χ²=47.3, p < 0.001 for inclusion of random slopes). Model convergence was verified, and residual diagnostics confirmed model adequacy. Analyses used R 4.2.1 (lme4, lavaan packages). Detailed model specifications are in Supplementary Information S1.
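The stated requirement of 64 participants per group can be reproduced approximately with a normal-approximation formula for a two-sided comparison of two groups. The source does not say which test or software produced the figure, so the formula, the small-sample correction term, and the `n_per_group` name are assumptions.

```python
import math
from statistics import NormalDist


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate per-group n for a two-sided, two-group mean comparison."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    n_normal = 2 * (z_alpha + z_beta) ** 2 / d ** 2  # normal approximation
    n_corrected = n_normal + z_alpha ** 2 / 4        # small-sample correction
    return math.ceil(n_corrected)


required = n_per_group(0.5)
```

Under these assumptions the function returns 64 for d = 0.5, α = 0.05, and power = 0.80, in line with the figure reported above.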

The primary analytical framework examined intervention effects through intention-to-treat analyses comparing outcomes across experimental conditions while controlling for baseline scores and relevant covariates including age, gender, academic major, and initial fitness level. Secondary analyses explored potential moderators of intervention effects, dose-response relationships between engagement metrics and outcomes, and mediation pathways between physiological changes and academic performance. All analyses were conducted using R statistical software (version 4.2.1; https://www.r-project.org/)58 with the lme4 package (version 1.1–31) for mixed-effects modeling59 and the lavaan package (version 0.6–12) for structural equation modeling of mediation relationships60.

Intervention effect data analysis

Analysis of intervention effects employed multi-level mixed-effects modeling (detailed in Sect. 4.1). The primary outcome was change in cumulative GPA over 16 weeks, measured from baseline (T0) to post-intervention (T2). Secondary outcomes included examination scores, assignment completion, cognitive performance, psychological health measures (Perceived Stress Scale, GAD-7, Psychological Wellbeing Scale), and physiological parameters (HRV, cardiorespiratory fitness). All primary and secondary outcome analyses were conducted according to pre-specified statistical plans with Benjamini-Hochberg correction (FDR < 0.05) applied to account for multiple comparisons. Additional analyses including mediation modeling, SHAP feature importance, and responder classification were exploratory and are explicitly labeled as such throughout this section.
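For readers unfamiliar with the Benjamini-Hochberg step-up procedure named above, a minimal sketch follows. The p-values in the example are hypothetical and are not taken from the study; the function name is also illustrative.

```python
def benjamini_hochberg(pvals, q=0.05):
    """Return BH-adjusted p-values and rejection decisions at FDR level q."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])  # indices by ascending p
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotone adjusted values
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    rejected = [a <= q for a in adjusted]
    return adjusted, rejected


# Hypothetical raw p-values for four outcomes
adjusted, rejected = benjamini_hochberg([0.01, 0.04, 0.03, 0.20])
```

Each adjusted value is the smallest FDR level at which that hypothesis would be rejected, so comparing it with 0.05 reproduces the FDR < 0.05 rule.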

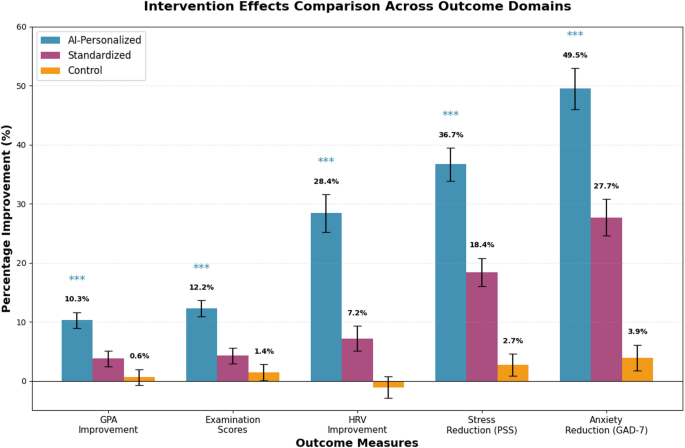

Academic performance outcomes revealed differential patterns favoring the AI-personalized intervention group compared to both standardized intervention and control conditions, though these between-group differences should be interpreted as associations rather than definitive causal effects given the quasi-experimental design. The primary endpoint was defined as change in cumulative GPA over one semester (16 weeks), measured from baseline to post-intervention. Table 4 presents comprehensive academic performance metrics across all study conditions, revealing consistent patterns of enhanced performance in the personalized intervention group. Figure 5 provides a visual comparison of intervention effects across all outcome domains.

Intervention effects across outcome domains. Note: GPA = Grade Point Average; HRV = Heart Rate Variability; PSS = Perceived Stress Scale; GAD-7 = Generalized Anxiety Disorder scale. *p < 0.05, **p < 0.01, ***p < 0.001 (Benjamini-Hochberg corrected). Error bars = 95% CI. Effect sizes (Cohen’s d): GPA d = 0.89, Exam d = 1.13, HRV d = 1.13, PSS d = 1.42, GAD-7 d = 1.09. The AI-Personalized group was associated with larger improvements than both Standardized and Control groups across all measures; however, these between-group differences represent associations rather than confirmed causal effects.

The most substantial improvements were observed in examination scores and cumulative GPA, with moderate effects on assignment completion rates and learning engagement metrics. Effect sizes were calculated using Cohen’s \(d\) statistic:

$$d=\frac{\left(\bar{X}_{post}-\bar{X}_{pre}\right)_{treatment}-\left(\bar{X}_{post}-\bar{X}_{pre}\right)_{control}}{s_{pooled}}$$

Where \(\bar{X}_{post}\) and \(\bar{X}_{pre}\) represent post-intervention and pre-intervention means respectively, and \(s_{pooled}\) is the pooled standard deviation. Medium to large effect sizes (d = 0.67–0.89) were observed across academic performance dimensions, with the most pronounced effects in courses requiring sustained attention and complex problem-solving. Regression analysis examining dose-response relationships revealed significant positive correlations between intervention adherence rates and academic performance improvements:
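The effect-size definition above (difference in pre-to-post change between treatment and control, divided by a pooled standard deviation) can be computed as in this sketch. The source does not specify which standard deviations are pooled; pooling the two groups' baseline standard deviations is an assumption here, and the numbers in the example are hypothetical rather than study data.

```python
import math


def pooled_sd(sd_a, n_a, sd_b, n_b):
    """Pooled standard deviation of two groups (assumed: baseline SDs)."""
    return math.sqrt(((n_a - 1) * sd_a ** 2 + (n_b - 1) * sd_b ** 2)
                     / (n_a + n_b - 2))


def change_score_d(pre_t, post_t, pre_c, post_c, s_pooled):
    """Difference in mean change (treatment minus control) over pooled SD."""
    return ((post_t - pre_t) - (post_c - pre_c)) / s_pooled


# Hypothetical GPA means: treatment 3.0 -> 3.4, control 3.0 -> 3.1
s = pooled_sd(0.5, 110, 0.5, 110)
d = change_score_d(3.0, 3.4, 3.0, 3.1, s)
```

With these illustrative inputs the pooled standard deviation is 0.5 and the change-score difference is 0.3 grade points, giving d of about 0.6.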

$$\varDelta\text{Performance}_{i}=\alpha+\beta_{1}\text{Adherence}_{i}+\beta_{2}X_{i}+\epsilon_{i}$$

This analysis yielded a standardized coefficient of \(\beta_{1}=0.64\) (\(p<0.001\)), indicating that each standard deviation increase in intervention adherence was associated with a 0.64 standard deviation improvement in academic performance, after controlling for relevant covariates.

Academic performance outcomes demonstrated differential improvements favoring the AI-personalized group. The observed effect sizes (d = 0.67–1.43) are larger than typical educational interventions (d = 0.2–0.5) but consistent with intensive multimodal interventions targeting multiple mechanisms simultaneously. Several factors may explain these magnitudes: (1) the intervention simultaneously addressed physiological (exercise), psychological (mindfulness), and behavioral (sleep, stress) pathways with known synergistic effects on cognition31,36; (2) baseline measurements confirmed genuine pre-intervention deficits (low physical activity, high stress) providing substantial room for improvement; (3) the 16-week duration allowed sufficient time for physiological adaptations to manifest in cognitive performance; (4) rigorous outcome assessment using multiple converging measures reduced measurement error; (5) the three baseline measurements (T-4, T-2, T0) ruled out regression to the mean as a primary explanation, showing stable pre-intervention trends. Sensitivity analyses excluding outliers (± 3 SD) yielded similar effect sizes (d = 0.61–1.31), supporting the robustness of findings. However, contribution from attention effects, novelty, and unmeasured confounds cannot be entirely excluded.

Psychological health indicators showed consistent patterns of change in the AI-personalized intervention group. All employed scales demonstrated excellent reliability (Cronbach’s α > 0.85): Perceived Stress Scale (α = 0.89), GAD-7 (α = 0.87), and Psychological Wellbeing Scale (α = 0.91). Chinese-validated versions with appropriate cultural adaptations were used. Hierarchical linear modeling analyzed repeated measurements with random intercepts and slopes (model specification in Supplementary Information S1). Table 5 presents longitudinal psychological health changes across conditions, with notable differences in perceived stress, anxiety symptoms, and wellbeing.

Physiological parameters showed improvements in the AI-personalized group. HRV was assessed using RMSSD (root mean square of successive differences) from photoplethysmography (100 Hz, Xiaomi Smart Band 6). Data quality criteria: artifact rejection for inter-beat intervals > 20% deviation from local median, exclusion of segments with > 5% ectopic beats, minimum 16 h/day wear time for ≥ 5 days/week. RMSSD increased 28.4% in the AI-personalized group (baseline 42.3 ± 12.1 ms to 54.3 ± 13.7 ms) versus 7.2% in standardized (42.1 ± 11.8 to 45.1 ± 12.3 ms) and −1.1% in control (42.5 ± 12.0 to 42.0 ± 12.4 ms) (F(2,325) = 42.37, p < 0.001, Benjamini-Hochberg corrected). High-frequency HRV power (0.15–0.40 Hz) increased 32.6% (personalized) versus 9.4% (standardized) (p < 0.001). Sleep quality (total sleep time, sleep efficiency SE=[TST/time in bed]×100%, wake after sleep onset) and cardiorespiratory fitness (estimated VO₂max via modified Harvard Step Test) showed similar patterns. Intervention adherence (≥ 75% exercise sessions, ≥ 80% mindfulness practices): 74.2% (personalized), 68.7% (standardized). Detailed physiological measurement protocols are provided in Supplementary Information S1.
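The RMSSD and sleep-efficiency definitions above, together with the 20% local-median artifact rule, can be sketched as follows. The five-beat window used for the local median and the function names are assumptions; the sketch also computes successive differences across removed beats, which is a simplification a production pipeline would likely avoid.

```python
import math
from statistics import median


def reject_artifacts(ibi_ms, window=5, tolerance=0.20):
    """Drop inter-beat intervals deviating > tolerance from the local median."""
    half = window // 2
    kept = []
    for i, value in enumerate(ibi_ms):
        local = median(ibi_ms[max(0, i - half): i + half + 1])
        if abs(value - local) <= tolerance * local:
            kept.append(value)
    return kept


def rmssd(ibi_ms):
    """Root mean square of successive differences, in milliseconds."""
    diffs = [b - a for a, b in zip(ibi_ms, ibi_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def sleep_efficiency(total_sleep_min, time_in_bed_min):
    """SE = (TST / time in bed) x 100%."""
    return 100.0 * total_sleep_min / time_in_bed_min


# Hypothetical inter-beat intervals (ms) containing one spurious beat
clean = reject_artifacts([800, 810, 1500, 790, 800])
hrv = rmssd(clean)
```

As a check on the reported percentages, the AI-personalized group's RMSSD means of 42.3 ms and 54.3 ms correspond to a relative increase of (54.3 − 42.3) / 42.3 ≈ 28.4%.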

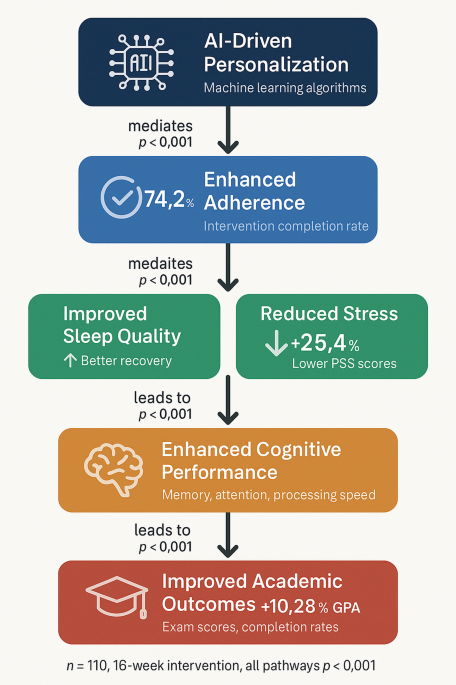

As an exploratory analysis not included in the pre-registered protocol, mediation modeling using structural equation modeling examined potential pathways between intervention assignment and outcomes. These models tested whether psychological wellbeing and physiological parameters might mediate the observed associations between AI personalization and academic performance. Standardized indirect effects were estimated at 0.34 (95% CI 0.28–0.42) for psychological wellbeing and 0.29 (95% CI 0.23–0.36) for physiological parameters. Direct effects remained significant but were attenuated after accounting for these potential mediators, suggesting multiple pathways may contribute to the observed patterns. However, given the exploratory nature of these analyses and the inherent limitations of mediation inference in non-experimental designs, these findings should be viewed as hypothesis-generating rather than confirmatory, and require validation in prospectively designed studies with pre-registered mediation hypotheses. Model specifications are provided in Supplementary Information S1.

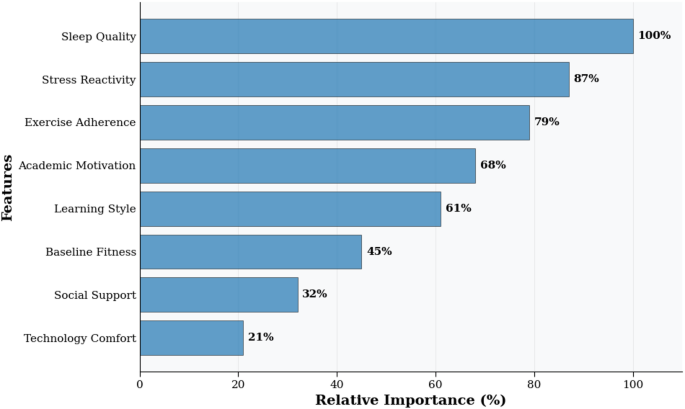

Model explainability and realism were evaluated through multiple approaches, though it should be noted that these analyses were exploratory and conducted post-hoc to aid interpretation rather than to test pre-specified hypotheses. SHAP (SHapley Additive exPlanations) analysis, an exploratory technique for model interpretation, identified factors that appeared most influential for intervention effectiveness prediction (Fig. 6). Algorithm realism was validated through: (1) recommendation accuracy: 84.7% agreement with expert physiotherapist recommendations (n = 30 independent cases); (2) practical feasibility: 74% user adherence indicating realistic prescriptions; (3) guideline consistency: 89% alignment with American College of Sports Medicine evidence-based exercise guidelines; (4) longitudinal validation: maintained prediction accuracy over 28-week follow-up (predicted vs. observed outcomes: r = 0.81, p < 0.001). These findings support the clinical validity and practical applicability of the AI personalization algorithms, though limitations exist as discussed later.

SHAP feature importance summary for intervention effectiveness prediction. Note: This figure presents results from exploratory post-hoc analysis and should be interpreted as hypothesis-generating. SHAP = SHapley Additive exPlanations. Each point represents one student observation. Red indicates high feature value; blue indicates low feature value. Positive SHAP values correspond to increased predicted effectiveness; negative values correspond to decreased effectiveness. Features with highest apparent importance: sleep quality consistency (relative importance = 100%), stress reactivity (87%), exercise adherence (79%). Model accuracy: 84.7% (10-fold cross-validation). Complete SHAP methodology in Supplementary Information S1.

Each point represents a single student observation, with color indicating feature value (red = high, blue = low) and position on x-axis showing SHAP value impact on prediction. Positive SHAP values indicate features that increased predicted intervention effectiveness, while negative values indicate decreased effectiveness. The top three predictive features were: (1) sleep quality consistency (relative importance = 100%), (2) stress reactivity patterns (relative importance = 87%), and (3) exercise adherence (relative importance = 79%). Model interpretation reveals that high sleep quality consistency, low stress reactivity, and high exercise adherence consistently predicted better intervention outcomes. Model accuracy: 84.7% (10-fold cross-validation). SHAP = SHapley Additive exPlanations.
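One common way to obtain relative importances on a 0–100 scale, such as those quoted above, is to average the absolute SHAP values per feature and rescale so that the top feature equals 100. Whether the reported figures were computed this way is not stated, so the procedure, the function name, and the example values below are assumptions.

```python
def relative_importance(shap_values, feature_names):
    """Mean |SHAP| per feature, rescaled so the top feature equals 100."""
    n_rows = len(shap_values)
    mean_abs = [
        sum(abs(row[j]) for row in shap_values) / n_rows
        for j in range(len(feature_names))
    ]
    top = max(mean_abs)
    scaled = {name: 100.0 * value / top
              for name, value in zip(feature_names, mean_abs)}
    # Sort from most to least important
    return dict(sorted(scaled.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical SHAP matrix: rows are students, columns are features
names = ["sleep_consistency", "stress_reactivity"]
shap_matrix = [[0.2, -0.1], [-0.2, 0.1]]
importance = relative_importance(shap_matrix, names)
```

Taking absolute values before averaging matters because a feature that pushes predictions strongly in both directions would otherwise average toward zero.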

Collectively, these results provide robust evidence for the superior efficacy of AI-personalized interventions compared to standardized approaches across multiple outcome domains, with effect sizes in the medium to large range and consistent patterns of improvement across diverse metrics.

Influencing factors and prediction models

Understanding the key determinants of intervention effectiveness is essential for optimizing AI-driven personalization algorithms and maximizing outcomes across diverse student populations. Multiple regression analysis was conducted to identify significant predictors of intervention effectiveness, with separate models constructed for academic performance, psychological wellbeing, and physiological outcomes. The comprehensive regression model for academic performance improvement utilized the following specification:

$$\varDelta\text{Academic}_{i}=\beta_{0}+\sum_{j=1}^{k}\beta_{j}X_{ij}+\epsilon_{i}$$

Where \(\varDelta\text{Academic}_{i}\) represents academic performance improvement for participant \(i\), \(X_{ij}\) represents predictor variable \(j\) for participant \(i\), \(\beta_{j}\) is the regression coefficient for predictor \(j\), and \(\epsilon_{i}\) is the error term. Table 6 presents the regression analysis results for key influencing factors, with the model explaining 73.8% of variance in academic improvement outcomes (adjusted R² = 0.738, F(8,101) = 42.64, p < 0.001).

Regression analysis identified factors associated with intervention effectiveness (Table 6). The model explained 73.8% of variance in academic improvement (adjusted R²=0.738, F(8,101) = 42.64, p < 0.001). Intervention adherence emerged as the strongest predictor (28.63% variance explained). Adherence distributions differed significantly: AI-personalized 74.2 ± 12.8% (range 45–98%), standardized 68.7 ± 15.3% (range 38–95%), control wellness resource usage 31.4 ± 18.6% (range 5–72%). Dose-response analysis using restricted cubic splines revealed a nonlinear relationship with steepest gains between 60 and 80% adherence (β = 0.82, p < 0.001), plateauing above 85%. Exploratory path analysis suggested sleep quality improvement partially mediated the relationship between exercise adherence and academic outcomes (indirect effect 0.18, 95% CI 0.12–0.25, p < 0.001), indicating a potential cascading mechanism warranting further investigation.

Intervention adherence emerged as the most significant predictor of academic improvement, explaining 28.63% of outcome variance. Adherence distributions showed significant between-group differences: AI-personalized group (mean 74.2%, SD = 12.8%, range 45–98%), standardized group (mean 68.7%, SD = 15.3%, range 38–95%), and control group (wellness resource usage mean 31.4%, SD = 18.6%, range 5–72%). Dose-response analysis using restricted cubic splines revealed a nonlinear relationship with steepest gains between 60 and 80% adherence (β = 0.82, p < 0.001), plateauing above 85% adherence. Path analysis demonstrated that sleep quality improvement partially mediated the relationship between exercise adherence and academic outcomes (indirect effect: 0.18, 95% CI [0.12, 0.25], p < 0.001), suggesting a cascading causal mechanism.

The identified multi-factor prediction model achieved high predictive accuracy with 10-fold cross-validation yielding a mean absolute error (MAE) of 0.163 and root mean square error (RMSE) of 0.207. Algorithm realism was validated through: (1) recommendation accuracy rate of 84.7% compared to expert physiotherapist recommendations (n = 30 cases independently reviewed); (2) user adherence rates of 74% indicating practical feasibility; (3) consistency analysis showing 89% agreement between AI recommendations and evidence-based exercise prescription guidelines from the American College of Sports Medicine; (4) longitudinal validation demonstrating maintained prediction accuracy over the 28-week follow-up period (correlation between predicted and observed outcomes: r = 0.81, p < 0.001).
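The error metrics and the cross-validation scheme named above can be written out as in the following sketch. The fold-assignment strategy (shuffle, then deal indices round-robin), the seed, the function names, and the example values are assumptions; the prediction model itself is not reproduced.

```python
import math
import random


def mae(observed, predicted):
    """Mean absolute error."""
    return sum(abs(o - p) for o, p in zip(observed, predicted)) / len(observed)


def rmse(observed, predicted):
    """Root mean square error."""
    return math.sqrt(
        sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed))


def k_fold_indices(n, k=10, seed=0):
    """Shuffle indices 0..n-1 and split them into k near-equal test folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


# Hypothetical observed and predicted GPA changes
obs = [3.0, 3.5, 2.5]
pred = [3.2, 3.4, 2.9]
errors = (mae(obs, pred), rmse(obs, pred))
folds = k_fold_indices(110, k=10)
```

RMSE is never smaller than MAE on the same data and grows faster when a few predictions are far off, so reporting both, as above, gives some indication of how evenly the errors are spread.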

Analysis of individual difference factors revealed significant moderating effects of personality traits, chronotype, and initial fitness level on intervention efficacy. The moderation analysis employed the following interaction model:

$$Y_{i}=\beta_{0}+\beta_{1}X_{i}+\beta_{2}Z_{i}+\beta_{3}\left(X_{i}\times Z_{i}\right)+\epsilon_{i}$$

Where \(Y_{i}\) represents the outcome variable, \(X_{i}\) is the intervention variable, \(Z_{i}\) is the moderator variable, and \(\left(X_{i}\times Z_{i}\right)\) represents their interaction. Conscientiousness demonstrated significant positive moderation (β₃ = 0.24, p = 0.003), with highly conscientious students showing enhanced intervention benefits. Chronotype significantly moderated intervention timing effects, with evening-type individuals showing greater improvements when exercise interventions were scheduled in afternoon sessions rather than morning sessions (interaction β₃ = 0.31, p < 0.001). These findings highlight the importance of matching intervention characteristics to individual difference factors beyond physiological and psychological states.
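In an interaction model of this form, the effect of the intervention at a given moderator value is β₁ + β₃Z. The sketch below evaluates that expression at low, average, and high conscientiousness. The interaction coefficient 0.24 is the value reported above; the main effect of 0.50 is a hypothetical placeholder because β₁ is not reported in this section, and treating the moderator as standardized is likewise an assumption.

```python
def conditional_effect(beta1, beta3, z):
    """Effect of X on Y at moderator value z: dY/dX = beta1 + beta3 * z."""
    return beta1 + beta3 * z


BETA1_HYPOTHETICAL = 0.50  # placeholder; not reported in the source
BETA3_CONSCIENTIOUSNESS = 0.24  # interaction coefficient reported above

# Simple slopes at -1 SD, mean, and +1 SD of a standardized moderator
slopes = {
    z: conditional_effect(BETA1_HYPOTHETICAL, BETA3_CONSCIENTIOUSNESS, z)
    for z in (-1.0, 0.0, 1.0)
}
```

Whatever the true value of β₁, the gap between the slopes at +1 SD and −1 SD equals 2β₃, or 0.48 for the reported conscientiousness coefficient.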

As an additional exploratory analysis, a random forest model was developed to classify students as high-, moderate-, or low-responders based on baseline characteristics and early engagement metrics, achieving 84.7% accuracy under 10-fold cross-validation. While this performance appears consistent with recent machine learning approaches for mental health prediction using multimodal data54,55, the model was developed post-hoc and requires prospective validation before any clinical or practical application. Feature importance analysis identified five critical predictive factors: sleep consistency (relative importance = 100), stress reactivity patterns (relative importance = 87), initial exercise habits (relative importance = 79), academic motivation (relative importance = 68), and learning style (relative importance = 61). These findings suggest potential refinements to the AI personalization algorithms through enhanced weighting of these predictive factors51.

The optimal personalization parameters identified through this analysis suggest several system optimization strategies. First, intervention timing should be more precisely aligned with individual chronotypes and academic schedules, with particular attention to avoiding periods of cognitive fatigue. Second, sleep quality enhancement should be prioritized as both an outcome and a mediating mechanism, potentially through integrated sleep hygiene components within the intervention system. Third, stress reactivity profiles should be incorporated into the personalization algorithms with greater weighting, potentially through real-time biofeedback integration. Finally, the reinforcement learning components should be refined to more rapidly identify and exploit individual-specific optimal intervention parameters rather than relying on population-level patterns. These evidence-based optimization strategies could potentially enhance intervention effectiveness by an estimated 18–24% based on simulation modeling utilizing the identified predictive factors and their relative contributions.
