Quality improvement project to transition psychosocial oncology clinical care to a telehealth workflow during the COVID-19 pandemic: a quasi-experimental study | BMC Health Services Research

Quality Improvement Team

The authors established a Quality Improvement (QI) team in March 2020 with diverse stakeholders and leadership, including administrators, social workers, psychiatrists, and triage leadership. Our team met regularly in working groups to discuss evaluation and implementation to improve care accessibility during the pandemic. These 30-minute meetings occurred two to three times per week, in addition to impromptu emergency sessions. Each session included evaluations of ongoing processes, assessments of workflow at a broad level, and reviews of current challenges associated with specific platforms. After first-line leadership reached consensus on the implementation plan, the investigators met with department leads for feedback. The authors received Research Ethics Board (REB) exemption and QI approval (21–0272) from the QI Review Committee (QIRC) within UHN. All procedures were approved by and performed according to the guidelines of the QI Review Committee within UHN. All participants who provided feedback first provided electronic written informed consent to participate in this study.

Analyzing psychosocial oncology workflow

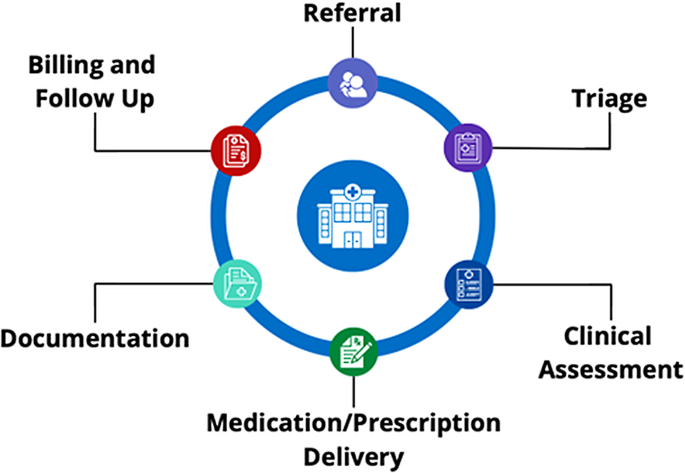

The investigators performed a comprehensive workflow analysis with the different stakeholders using the Systems Engineering Initiative for Patient Safety (SEIPS) 101 methodology to examine key aspects of the PSO workflow [13, 14]. As part of this analysis, the team developed a Journey Map to visualize and sequence the major stages of the workflow, including referral, triage, clinical assessment, medication/prescription delivery, documentation, billing, and follow-up communication (Fig. 1).

Psychosocial oncology workflow diagram

Planning and implementing digital transition

Digital technologies were reviewed, drawing on credible sources, for practical accessibility and protection of health information. We reviewed the capabilities of digital technologies that were available at and approved by UHN and that were compliant with the Health Insurance Portability and Accountability Act (HIPAA) and the Personal Health Information Protection Act (PHIPA). All digital tools were cross-referenced against guidance from national and provincial bodies, including the Canadian Medical Protective Association (CMPA) and the Ontario Medical Association (OMA).

An internal assessment of technologies that could be integrated into the current PSO workflow was then completed, guided by national and provincial bodies including the College of Physicians and Surgeons of Ontario (CPSO) and the CMPA. This process involved cross-referencing UHN-approved technologies, including Microsoft OneDrive, Microsoft Teams, and Adobe PDF Editor, as well as newly implemented UHN processes such as eFAX. Ad-hoc evaluations were performed to assess current technologies, their capabilities, and their alignment with PSO departmental needs. This functional analysis aimed to facilitate seamless workflow integration while supporting infection prevention and control protocols. After the QI team reviewed the assessment, the working group selected key quality indicators to measure changes between 2019 and 2021 (Table 1).

For this project, a telehealth appointment is defined as a patient encounter conducted via the Ontario Telemedicine Network (OTN), Microsoft Teams (MST), or telephone. Charting refers to the process of documenting a patient's medical history, symptoms, diagnoses, treatments, and other relevant healthcare information in a medical record. Triage incidents are defined as unresolved issues or missed referrals. E-prescriptions are PDF prescriptions that were faxed through our organization's electronic faxing system.
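To make the telehealth definition concrete, it can be expressed as a simple classification rule over encounter records. This is a minimal sketch, not the project's tooling: the `Encounter` record, its fields, and the platform labels are hypothetical, and it assumes each encounter carries exactly one platform label.

```python
from dataclasses import dataclass

# Platforms counted as telehealth under the project's definition:
# OTN, Microsoft Teams (MST), and telephone. Labels are hypothetical.
TELEHEALTH_PLATFORMS = frozenset({"OTN", "MST", "telephone"})


@dataclass(frozen=True)
class Encounter:
    patient_id: str  # hypothetical identifier field
    platform: str    # e.g. "OTN", "MST", "telephone", "in-person"


def is_telehealth(encounter: Encounter) -> bool:
    """Return True if the encounter used a platform counted as telehealth."""
    return encounter.platform in TELEHEALTH_PLATFORMS
```

For example, an encounter recorded with platform `"telephone"` counts as telehealth, while one recorded as `"in-person"` does not.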

As organizational change can induce uncertainty, anxiety, and stress for staff, leading to burnout [15], and as healthcare providers were already experiencing burnout before the pandemic [16, 17], our goal was to minimize the stress created by implementing change. One or two technologies were released every 4–6 weeks, each after pilot testing with a small group of users [18]. To further assist with the transition, investigators prepared and disseminated educational materials on digital tools, offered individual support, provided on-demand support to the department, and collected feedback from staff. Most importantly, the rollout was phased to reduce the impact on clinic flow. Plan-Do-Study-Act (PDSA) cycles were used to assess the success of the digital transition [18].

PDSA cycles for digital transition

The first PDSA cycle involved assessing the current PSO workflow to identify gaps in both paper-based and digital tools. A rapid workflow assessment was conducted by two clinical team members (CSQ, RS), followed by a review with clinical and administrative leadership. Using the SEIPS 101 journey map, we identified workflow areas that were misaligned with COVID-19 restrictions and determined candidate digital solutions [13, 14]. The findings were presented to working groups, including the administrative and psychiatry clinical teams, for validation. To ensure a smooth transition, a small-scale pilot was implemented with two clinicians who were early adopters of digital tools, minimizing disruption to the PSO team while assessing real-world feasibility. Although real-time feedback was collected through group discussions, it was not formally recorded because REB exemption and QIRC approval had not been obtained. The process was iteratively adjusted based on this feedback, and workflow processes were refined by incorporating input from clinical, administrative, and technological stakeholders. This led to the identification of tools suitable for broader implementation in subsequent cycles.

In the second PDSA cycle, digital tools were launched solely for the psychiatry team; this team was selected based on clinician volume and the need for comprehensive care. Pilot programs were implemented for clinical digital assessments using OTN, documentation accessibility through OneDrive, and billing and follow-up communications through Outlook. Knowledge mobilization efforts included presentations to engage stakeholders and ensure proper tool usage. Working groups and one-on-one feedback sessions were conducted to assess technical knowledge gaps and usability challenges, although the findings were not formally recorded because REB exemption and QIRC approval had not been obtained. Based on feedback from clinical, administrative, and technology leaders, adjustments were made to optimize the implementation of the digital tools.

In the third and final PDSA cycle, all remaining digital tools were implemented across the program. We aimed to assess clinician confidence and satisfaction with the implemented technologies to support long-term adoption and effectiveness. The tools implemented in this cycle included transitioning clinical assessments from OTN to MS Teams, integrating medication and prescription delivery using eFAX and Outlook, and implementing digital referral and triage workflows through Outlook and MS Teams. To support adoption, presentations, drop-in focus groups, and one-on-one training sessions were conducted to address technical concerns and enhance clinician proficiency. A structured evaluation was then conducted using JotForm surveys, following REB exemption and QIRC approval (21–0272) from our healthcare institution, to gather both quantitative and qualitative data on clinician satisfaction and tool effectiveness. Findings were analyzed and shared within the department and used to inform future refinements and enhance digital workflow adoption.

Collection of feedback and data

The team established a data source and measurement method for each digital intervention. During the initial phase of the transition, real-time feedback was collected through group discussions aligned with the PDSA methodology. Data sources included national and provincial databases such as eCancercare, local organizational databases such as the Public Health Scheduling System (PHS) and Electronic Patient Records (EPR), and departmental sources such as Outlook calendars; staff feedback was collected via surveys. All participants and stakeholders who provided feedback gave electronic written informed consent before completing any voluntary survey.

Following the implementation of the digital transition, surveys were completed by psychiatrists, psychologists, social workers, and administrative staff using JotForm, an online HIPAA-compliant platform [19]. Four survey instruments were developed for this study to collect data on the methods used to complete prescriptions, triage, charting, and administrative processes, as well as on staff experiences with the digital transition (Appendix G). These self-designed surveys were not piloted before use because of the impromptu nature of the project, which introduces limitations, including potential bias and uncertain reliability and validity. Data were collected in December 2021, after REB exemption and QIRC approval were obtained. Because the data were collected retrospectively, the surveys used categorical ranges rather than discrete values to mitigate recall bias [20, 21]. As the surveys were administered at the end of 2021 and asked respondents to reflect retrospectively on practices in 2019, 2020, and 2021, they are best interpreted as descriptive, role-specific perspectives on practice change over time rather than precise quantitative measures. Response rates varied across professional roles, with some groups overrepresented and others underrepresented (Appendix H), which may introduce bias and limit generalizability.
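As an illustration of how a categorical range item differs from a discrete recall question, the sketch below represents a set of ordered, non-overlapping response options and shows that any value falls into exactly one of them. The five ranges and the `to_range` helper are hypothetical; the study's actual response options are in its survey instruments (Appendix G) and are not reproduced here.

```python
import bisect

# Hypothetical response options for a "percentage of cases" question.
# UPPER_BOUNDS[i] is the inclusive upper bound of LABELS[i], in percent.
UPPER_BOUNDS = [0, 25, 50, 75, 100]
LABELS = ["0%", "1-25%", "26-50%", "51-75%", "76-100%"]


def to_range(percent: float) -> str:
    """Return the label of the response option that contains `percent`."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    # bisect_left gives the index of the first upper bound >= percent,
    # which is the index of the option containing that value.
    return LABELS[bisect.bisect_left(UPPER_BOUNDS, percent)]
```

A respondent who recalls "roughly 60%" only needs to be confident the true value lies in the 51-75% option, which is the recall-bias rationale the text gives.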

Several evidence-based strategies were implemented in this project to optimize stakeholder engagement. Stakeholder engagement provides the project leads with perspectives on topics that may fall outside their own areas of expertise [22]. Higher survey response rates are also important because surveys allow us to monitor progress and measure improvement [18]. To maximize response rates, the QI team sent reminder emails about the surveys and personal emails to those who had not completed them.

Analysis

All quantitative data from the databases were analyzed using descriptive statistics to assess trends over time. The data were used to calculate the relevant percentage for each metric, which was then compared across the three years to identify trends. For the percentage of virtual encounters, descriptive statistics were applied to determine whether the 90% benchmark was achieved. Descriptive statistics were also used to analyze no-show assessment rates, wait times, and catchment area to observe any trends and differences between 2019, 2020, and 2021.
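The benchmark comparison amounts to a per-year proportion of virtual encounters checked against a fixed 90% threshold. This is a minimal sketch that assumes a hypothetical input of `(year, is_virtual)` pairs, one per encounter; it is not the project's analysis code, and any counts used with it here are invented for illustration rather than study results.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

BENCHMARK = 0.90  # 90% virtual-encounter benchmark stated in the text


def virtual_share_by_year(encounters: Iterable[Tuple[int, bool]]) -> Dict[int, float]:
    """Return the proportion of virtual encounters for each year present."""
    totals: Counter = Counter()
    virtual: Counter = Counter()
    for year, is_virtual in encounters:
        totals[year] += 1
        if is_virtual:
            virtual[year] += 1
    # Every year in `totals` has at least one encounter, so no division by zero.
    return {year: virtual[year] / totals[year] for year in sorted(totals)}


def meets_benchmark(share: float, benchmark: float = BENCHMARK) -> bool:
    """True if the virtual share reaches or exceeds the benchmark."""
    return share >= benchmark
```

For instance, a year with 19 virtual encounters out of 20 yields a share of 0.95, which meets the 90% benchmark, whereas 1 out of 10 (0.10) does not.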

The quantitative analysis of the surveys was conducted using descriptive statistics and trend analysis. Response and completion rates were calculated for each survey and then averaged across all four surveys. A survey responder was defined as anyone who submitted a survey, regardless of completion, so partially completed surveys were included in the response rate calculation. Descriptive statistics were then used to compute the average response for each question, enabling trends over time to be observed. Given that response rates were uneven across roles, the findings may not fully represent all staff perspectives; nonetheless, the data provide descriptive insight into staff experiences of the digital transition.
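Written out, the response and completion rate calculations look like the sketch below. The `SurveyTally` record and its fields are hypothetical. The text does not state the denominator used for completion rates, so this sketch assumes both rates are computed relative to the number of staff invited; the cross-survey figure is the unweighted mean of the per-survey rates, as the text describes.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable, Tuple


@dataclass(frozen=True)
class SurveyTally:
    invited: int    # staff who received the survey (assumed denominator)
    submitted: int  # any submission, complete or partial
    completed: int  # fully completed submissions

    def __post_init__(self) -> None:
        if self.invited <= 0 or not 0 <= self.completed <= self.submitted <= self.invited:
            raise ValueError("expected 0 <= completed <= submitted <= invited and invited > 0")

    def response_rate(self) -> float:
        # Partial submissions count as responses, per the study's definition.
        return self.submitted / self.invited

    def completion_rate(self) -> float:
        # Assumption: completion rate shares the "invited" denominator.
        return self.completed / self.invited


def average_rates(surveys: Iterable[SurveyTally]) -> Tuple[float, float]:
    """Unweighted mean of per-survey response and completion rates."""
    tallies = list(surveys)  # materialize so the input can be iterated twice
    return (mean(s.response_rate() for s in tallies),
            mean(s.completion_rate() for s in tallies))
```

For example, surveys where 8 of 10 and 10 of 20 invitees responded have response rates of 0.8 and 0.5, giving an unweighted mean of 0.65; pooling invitees instead would give 18/30 = 0.6, so the choice of mean matters when survey sizes differ.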

Regarding qualitative data, real-time feedback was not recorded during the implementation of the digital tools, as the study was conducted in an impromptu manner without prior REB or QIRC approval. As a result, a full qualitative analysis of real-time feedback was not possible. Although open-ended questions were included in the retrospective surveys, most of the feedback received was not directly relevant to the digital tool implementation, except in the general survey (Appendix E). Because the general survey feedback was collected retrospectively, it was not addressed within this project and is not analyzed in depth. However, key quotes are summarized to provide an overview of the challenges and successes of the implementation.
